Tiled Deferred Lighting

Current Implementation of Tiled Deferred Lighting

Image that shows the number of lights that are relevant to each tile

Final Image of point lights in the scene.

Programmer

In this blog I explain the method in which I handle descriptor tables in my game engine.


Current implementation of geometry mipmapping for landscapes in Black Osiris.

https://www.youtube.com/watch?v=bHlnEzXrbqQ&feature=youtu.be

This is the current implementation of Eric Bruneton's Atmospheric Scattering system with an embedded time of day system.

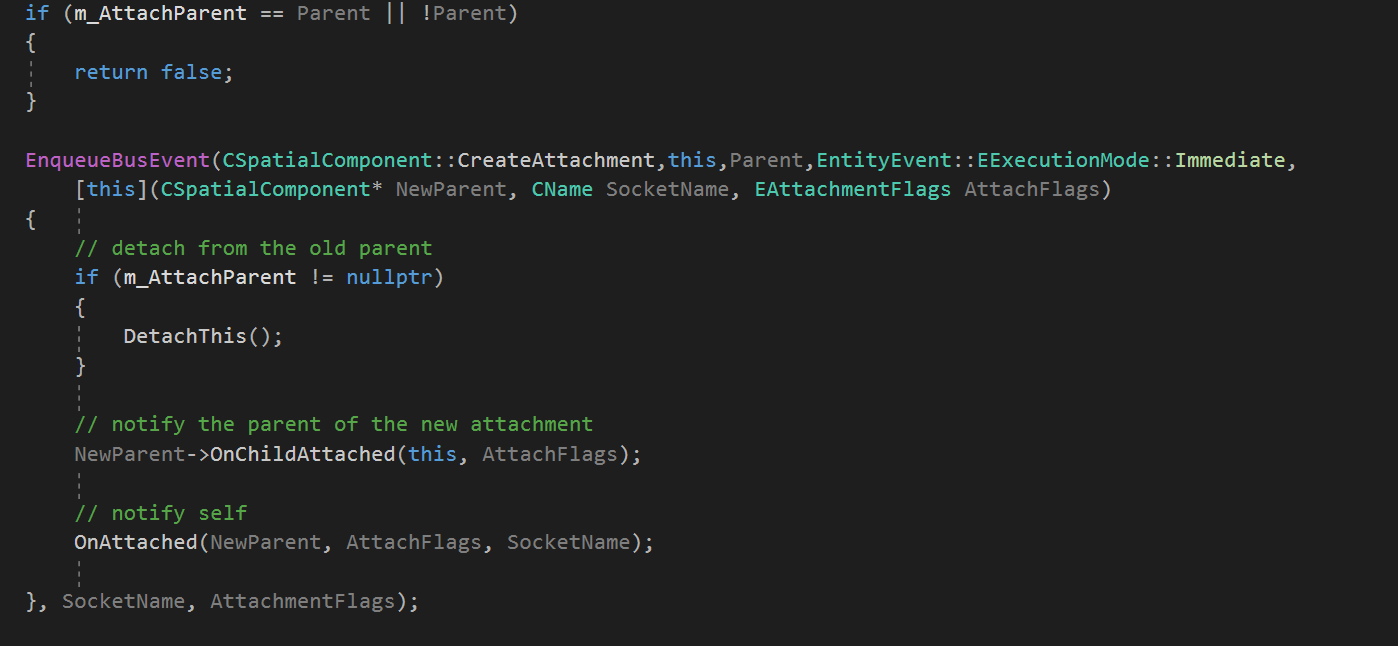

This is an extension of part 2 of the multithreading gameplay logic section. This entry is more about creating a better-looking API that does not require as much setup for messaging.

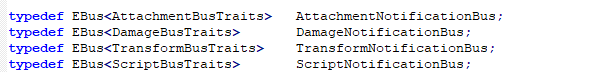

Old API Bus declaration

Old Bus API event dispatching.

Example of new API Design.

This approach removes the need for EBus and EBusTraits declarations using some macro magic. The user provides the sender, receiver, execution mode, message function, and message arguments.

In part 1 of Multithreading Gameplay Logic I talked about how one could use an entity component system to achieve concurrent gameplay logic. One of the major problems I had with this method was the inability to update a parent entity's components prior to the child entity's. After some late night coding I came up with a somewhat straightforward method.

In the presentation covering Multiprocessor Game Loops in Uncharted 2, Jason Gregory explains how they handled object hierarchy. They chose a simple system in which they maintain "update buckets". Each bucket is responsible for ticking a certain level of the game object hierarchy, such as a bucket for vehicles, one for characters, one for weapons, and so on. Because relatively few components require transform information (the input component, for example), I chose to extend the ComponentServiceProvider class, from now on referred to as ComponentTickBus, with an enum called "execution mode". There are two options for a tick bus's execution mode: Default and Hierarchical. The default mode works and schedules the same as the previous method, but the hierarchical mode is where things get interesting.

The entity world is split into multiple layers known as TickTaskLevels. Each TickTaskLevel represents a single bucket and contains a ComponentTickBus instance for every available component type. After an entity is attached or detached, it determines its new TickTaskLevel. Every component whose tick bus's execution mode is hierarchical removes itself from the old tick task level and registers itself with the new one. As an added detail, component tick buses whose execution mode is default are automatically registered into a "global tick task level".

All of the components now follow the same graph-based execution order defined in Part 1, but on a per-TickTaskLevel basis, with level i + 1 waiting on all of the tick tasks in level i.
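The level assignment can be sketched roughly as follows. This is only an illustration under my own assumptions: the `Entity` struct, `ComputeTickTaskLevel`, `BuildTickTaskLevels`, and `TickOrder` are hypothetical names, the level is assumed to equal the entity's depth in the attachment hierarchy, and the hierarchy is assumed to contain no cycles. The engine's actual TickTaskLevel code is not shown in this post.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for an entity: only the attachment parent matters here.
struct Entity {
    std::string name;
    int parent;  // index of the parent entity, or -1 for a root
};

// Assumption: an entity's level is its depth in the attachment hierarchy (roots are level 0).
int ComputeTickTaskLevel(const std::vector<Entity>& entities, int index) {
    assert(index >= 0 && index < static_cast<int>(entities.size()));
    int level = 0;
    for (int p = entities[index].parent; p != -1; p = entities[p].parent) {
        ++level;
    }
    return level;
}

// Bucket entity indices by level; bucket i must finish ticking before bucket i + 1 starts.
std::vector<std::vector<int>> BuildTickTaskLevels(const std::vector<Entity>& entities) {
    std::vector<std::vector<int>> levels;
    for (int i = 0; i < static_cast<int>(entities.size()); ++i) {
        const std::size_t level = static_cast<std::size_t>(ComputeTickTaskLevel(entities, i));
        if (levels.size() <= level) levels.resize(level + 1);
        levels[level].push_back(i);
    }
    return levels;
}

// Flatten the levels into the order in which entities would be ticked.
std::vector<std::string> TickOrder(const std::vector<Entity>& entities) {
    std::vector<std::string> order;
    for (const auto& level : BuildTickTaskLevels(entities)) {
        // Entities within one level do not depend on each other and could tick concurrently.
        for (int i : level) order.push_back(entities[i].name);
    }
    return order;
}
```

With a weapon attached to a character that is attached to a vehicle, the vehicle lands in level 0, the character in level 1, and the weapon in level 2, so parents are always ticked before their children.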

So today I'll be going over my second approach to multithreading gameplay logic. This approach is similar to the actor model, in that entities are capable of updating their internal state, but to update other entities, they must send messages. An extension that many engines add to the actor model is a dependency system. The dependency system helps with task batching in the multithreading phase, and determines which messages can be sent immediately and which must be deferred until the end of the frame.

In my approach, there are five important classes: EBusTraits, EBus, CEntityBusPeer, CActor, and CActorComponent. EBusTraits provides custom configuration on a per-bus basis. EBus is the specific bus and handles dispatch logic for a message. Entity bus peers are equivalent to the actors in the actor model, in that they send and receive messages, while CActor and CActorComponent are similar to Actors and Actor Components in Unreal Engine 4 and are derived from CEntityBusPeer.

As previously stated, I have incorporated a dependency system into this approach. Actors and actor components that are likely to access each other during a tick add themselves to a dependency list. At the beginning of the frame, all actors and components that depend on each other are put into a single job and updated on the same thread. This batching approach is used in (1) and (2).
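One way to picture the batching step is as a grouping of actors into connected sets. The sketch below uses a union-find structure, which is my own choice for the illustration and not necessarily what the engine does; `DependencyGroups`, `AddDependency`, and `BuildJobs` are hypothetical names, and actors are represented by plain indices.

```cpp
#include <cassert>
#include <map>
#include <numeric>
#include <utility>
#include <vector>

// Minimal union-find over actor indices (hypothetical helper, not engine code).
class DependencyGroups {
public:
    explicit DependencyGroups(int actorCount) : parent_(actorCount) {
        std::iota(parent_.begin(), parent_.end(), 0);  // every actor starts alone
    }

    // Declare that actors a and b may access each other during a tick.
    void AddDependency(int a, int b) {
        const int rootA = Find(a);
        const int rootB = Find(b);
        parent_[rootA] = rootB;
    }

    // One job per group of mutually dependent actors; each job runs on one thread.
    std::vector<std::vector<int>> BuildJobs() {
        std::map<int, std::vector<int>> byRoot;
        for (int i = 0; i < static_cast<int>(parent_.size()); ++i) {
            byRoot[Find(i)].push_back(i);
        }
        std::vector<std::vector<int>> jobs;
        for (auto& entry : byRoot) jobs.push_back(std::move(entry.second));
        return jobs;
    }

private:
    int Find(int x) {
        assert(x >= 0 && x < static_cast<int>(parent_.size()));
        while (parent_[x] != x) {
            parent_[x] = parent_[parent_[x]];  // path halving keeps lookups cheap
            x = parent_[x];
        }
        return x;
    }

    std::vector<int> parent_;
};
```

Actors that never declare a dependency end up in single-actor jobs, which can be scheduled freely across worker threads.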

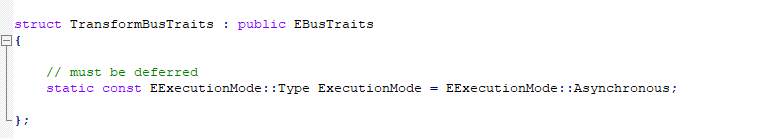

Definition of the transform bus traits. Messages sent on this bus must be dispatched during the "Asynchronous" phase (that is, they must be executed at the end of the frame).

Definitions of specific buses.

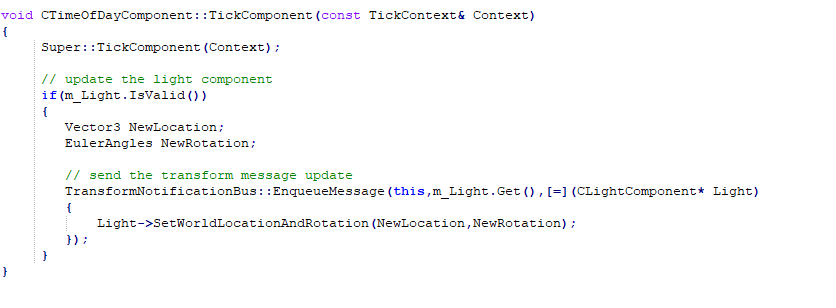

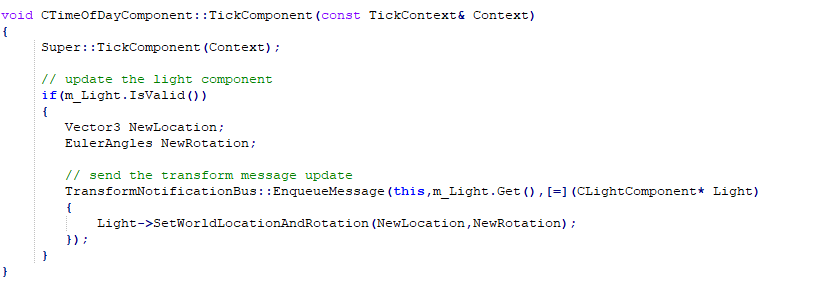

Example of sending a message to update a light component on the transform notification bus.

As one can see, I chose to take a different approach when it came to the messaging system. Many messaging systems rely on either an uber-message or a derived message class approach. Seeing as there might be hundreds, or thousands, of messages, I chose to use lambdas.
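To make the lambda idea concrete, here is a minimal single-threaded sketch. Everything in it is a hypothetical stand-in (`MessageBus`, `ExecutionMode`, `LightComponent`, `Send`, `Flush`); it is not the EBus API, and a real multithreaded version would need per-thread queues or locking around the pending list.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical execution modes, loosely following the "Asynchronous" phase described above.
enum class ExecutionMode { Immediate, Deferred };

// Hypothetical receiver type for the example.
struct LightComponent {
    float x = 0.0f;
};

// A bus whose messages are plain callables instead of dedicated message classes.
template <typename Receiver>
class MessageBus {
public:
    using Message = std::function<void(Receiver&)>;

    explicit MessageBus(ExecutionMode mode) : mode_(mode) {}

    void Send(Receiver& receiver, Message message) {
        if (mode_ == ExecutionMode::Immediate) {
            message(receiver);
        } else {
            // The receiver must stay alive until Flush; only a pointer is stored.
            pending_.push_back({&receiver, std::move(message)});
        }
    }

    // Called once at the end of the frame to run everything that was deferred.
    void Flush() {
        std::vector<Pending> batch;
        batch.swap(pending_);  // messages sent while flushing wait for the next Flush
        for (auto& p : batch) p.message(*p.receiver);
    }

    std::size_t PendingCount() const { return pending_.size(); }

private:
    struct Pending {
        Receiver* receiver;
        Message message;
    };

    ExecutionMode mode_;
    std::vector<Pending> pending_;
};
```

A sender would write something like `bus.Send(light, [](LightComponent& l) { l.x = 5.0f; });` and the change only becomes visible after `Flush()` when the bus is in deferred mode. No message struct has to be declared for each new kind of message.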

Pros:

1) Actors/Components are completely decoupled, allowing for far more efficient multithreading than the previous approach.

2) Old code bases are easier to port to this approach, either by creating a dependency or by converting any Actor/Component manipulation to an asynchronous message.

3) Allows parent entities/components to be updated prior to their children.

Cons:

1) Requires some external systems to become aware of the new multithreaded architecture. For example, any thread can be processing a task that reads from or writes to the physics world. To compensate for this, some approaches buffer physics changes until a certain point, meaning that all ray casts run against an old state.

2) Because Actors/Components are updated one at a time, it becomes impossible to leverage any optimizations that could be done through a batching approach.

So I've been racking my brain about the best way to implement multithreaded gameplay logic, and I've come to two main solutions. The first solution is a message-based approach in which entities and components can update their own logic, but must pass messages to other entities/components to update their state. A few examples of this are in (1), (2), (3). The second approach, the one I will be discussing today, applies an entity component system methodology to multithreading.

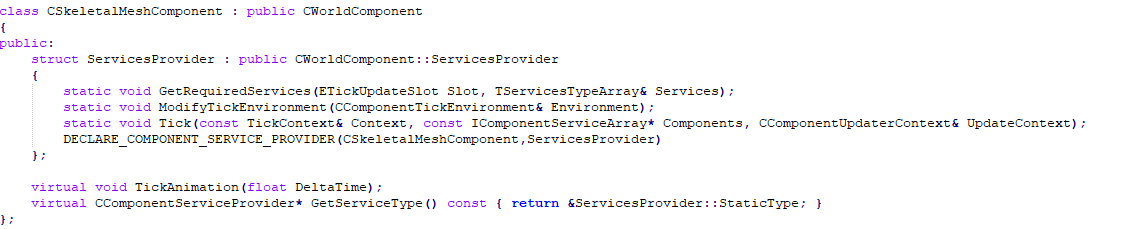

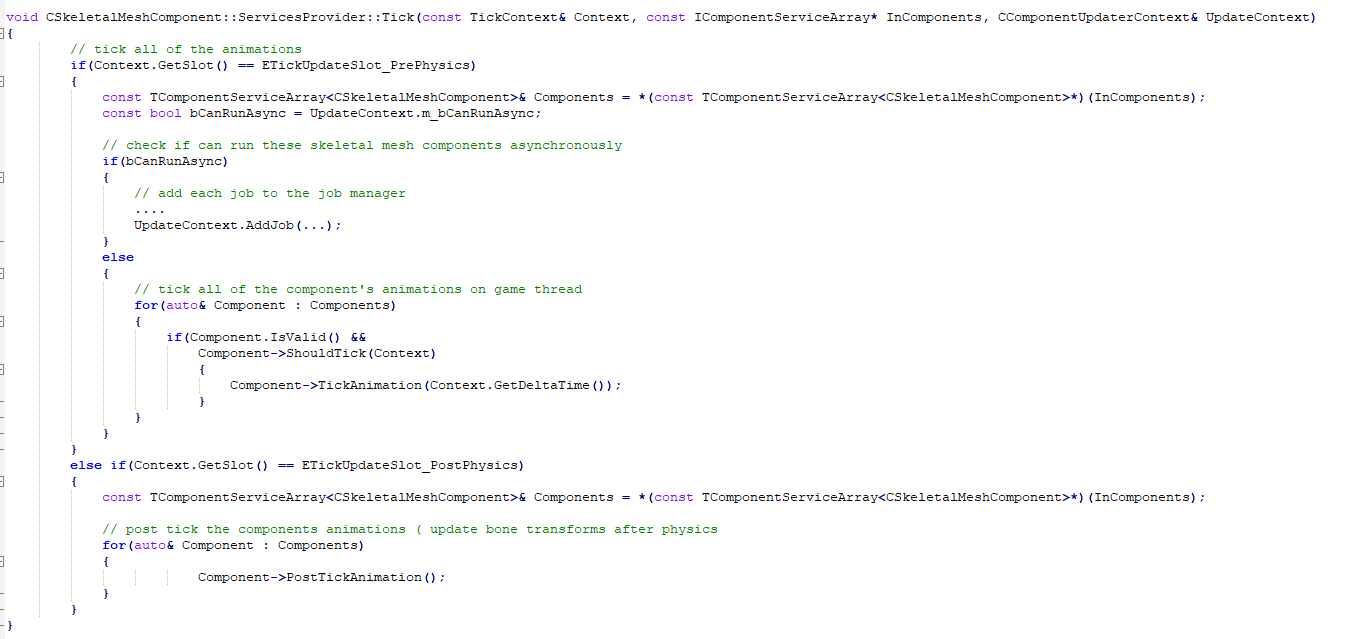

My ECS updating strategy is split between "components" and "service providers". Examples of components are a skeletal mesh component, which handles animating skinned meshes, and an input component, which handles dispatching input events. The responsibility of a "service provider" is to determine the data access policy and the updating logic of a specific component type.

Example of a skeletal mesh component and its relevant services provider.

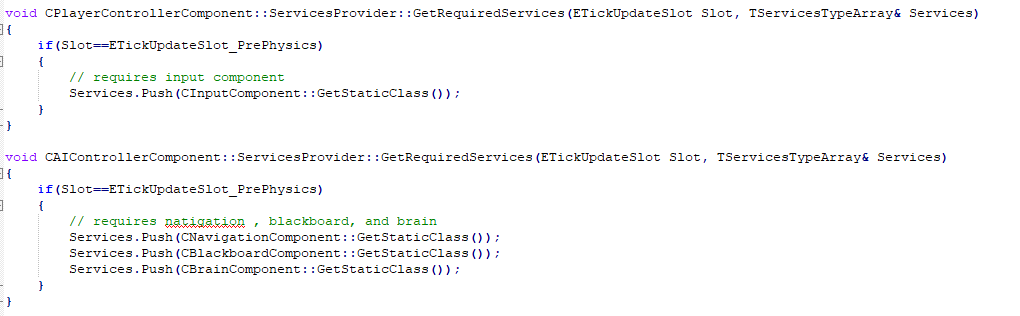

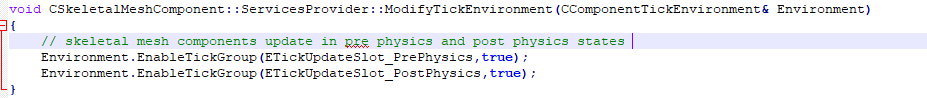

The services provider interface contains three functions: "GetRequiredServices", "ModifyTickEnvironment", and "Tick". The first function's responsibility is to determine the types of data that will be accessed during a tick phase. For example, a PlayerControllerComponent's responsibility is to update the player's pawn from given input, so its services provider states that it requires the CInputComponent's service provider to be updated first. The second function states which tick phases the services provider supports, e.g. pre-physics, post-physics, etc. The last function handles gameplay logic.
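The ordering implied by "GetRequiredServices" can be illustrated with a small topological sort. This is a sketch under assumptions: `ProviderInfo`, `TickScheduler`, `Register`, and `BuildTickOrder` are hypothetical names, providers are identified by strings for brevity, every required service is assumed to be registered, and the dependency graph is assumed to be acyclic.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>
#include <vector>

// Hypothetical description of a provider: its name and the providers it reads from.
struct ProviderInfo {
    std::string name;
    std::vector<std::string> requiredServices;
};

// Depth-first topological sort: every provider appears after the ones it requires.
class TickScheduler {
public:
    void Register(const ProviderInfo& info) { providers_[info.name] = info; }

    std::vector<std::string> BuildTickOrder() const {
        std::vector<std::string> order;
        std::set<std::string> visited;
        for (const auto& entry : providers_) Visit(entry.first, visited, order);
        return order;
    }

private:
    void Visit(const std::string& name, std::set<std::string>& visited,
               std::vector<std::string>& order) const {
        if (!visited.insert(name).second) return;  // already scheduled
        const auto it = providers_.find(name);
        assert(it != providers_.end() && "required service was never registered");
        // Schedule everything this provider depends on before the provider itself.
        for (const auto& dep : it->second.requiredServices) Visit(dep, visited, order);
        order.push_back(name);
    }

    std::map<std::string, ProviderInfo> providers_;
};
```

Providers with no path between them in this graph touch unrelated data, so in a real scheduler they are the ones that could be ticked on different threads at the same time.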

Example of GetRequiredServices for the AI controller and player controller components.

Example of ModifyTickEnvironment for skeletal mesh components.

Example of tick logic for skeletal mesh components.

So now onto the pros and cons of this approach.

Pros:

1) Makes asynchronous processing ridiculously simple.

2) Data access patterns are explicit.

3) Batched processing gives room for data-oriented optimizations.

Cons:

1) Incapable of handling update order on a per-entity basis (e.g. parent before child).

2) The increased number of sync points (between each component type) reduces the chance of full CPU utilization.

It's difficult for me to determine whether the pros outweigh the cons of this approach. It seems that most open-source game engines ignore the importance of updating parent entities prior to their children. While Unreal Engine handles it and Jason Gregory explains the importance of the approach, a simple Google search will show that many have issues with Unity choosing to ignore execution order. For now, I believe that I will be keeping this on the back burner until I learn how others handle this glaring issue with the ECS approach.

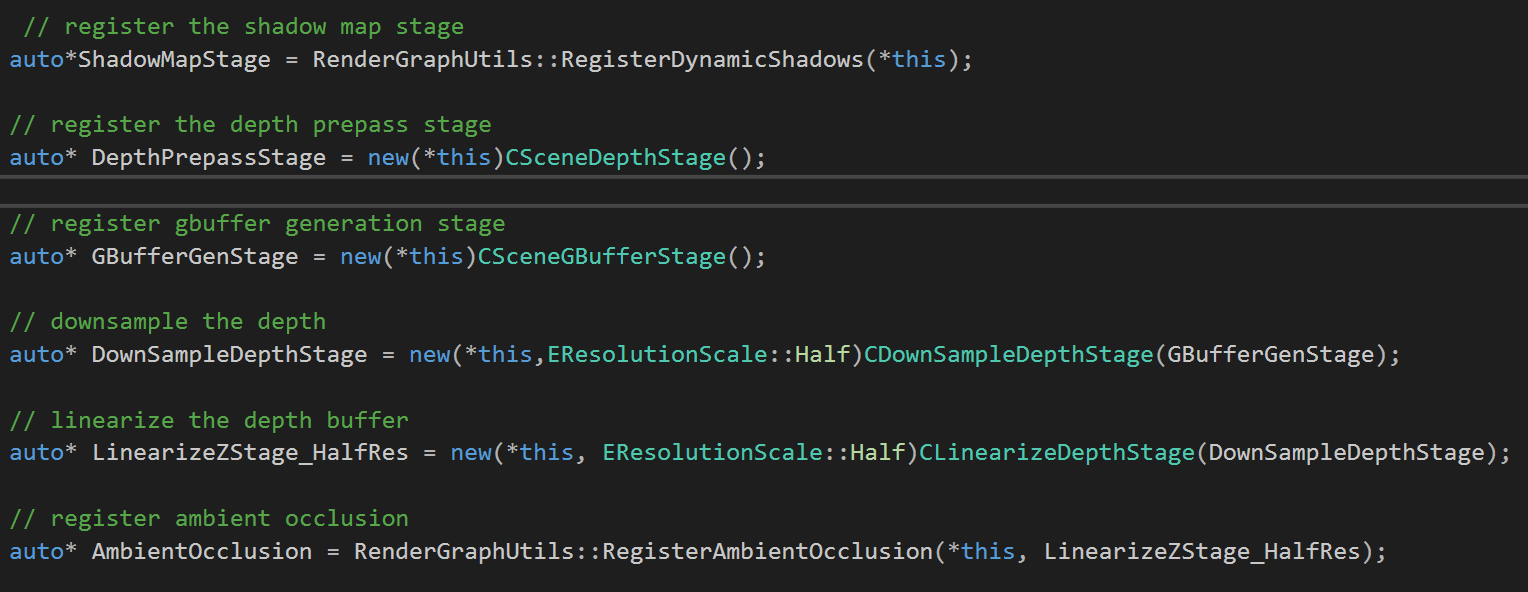

Recently I've been looking at a better way to handle the renderer architecture of the engine. After looking at Frostbite's implementation I decided to go with a render graph approach. The render graph architecture consists of three main classes: CRenderGraph (a transient graph generated every frame that contains the render target for resolve and all of the player views), CPipelineStage (represents a single rendering pass), and IPooledRenderTarget (a render target from the pool).

The render graph has three phases: stage generation, resource compilation, and stage execution.

In the stage generation phase, the render graph determines the stages that will be needed for the frame. For example, in debug mode the user will want to render debug geometry, so the "CDebugDrawStage" is registered with the render graph; and if running in deferred shading mode, the CSceneGBufferStage and CTiledLightingStages are registered.

Example of stage generation for the deferred lighting render graph.

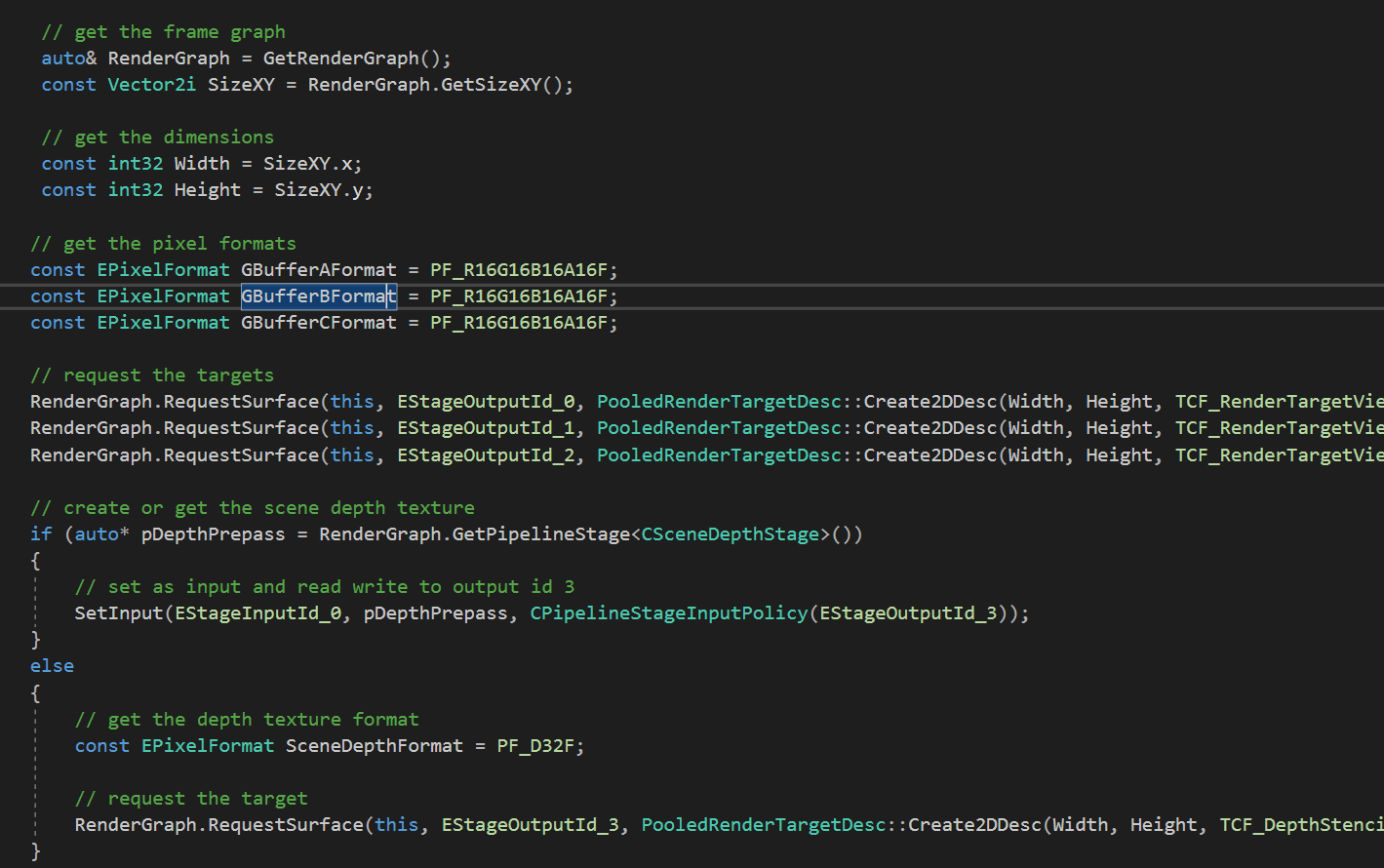

In the resource compilation phase, render stages register with the render graph the temporary resources they require. These resources can be structured buffers, render targets, etc. After all of the resources have been collected, the render graph determines the ordering of the stages and the optimal points for resource transitions (for Vulkan and DirectX 12) and render target resolves.

Example of the compile resources phase for CSceneGBufferStage. This stage detects whether a depth prepass stage is registered with the graph. If it is, the gbuffer stage takes the depth stage's output as its input and registers it as a "read write" resource with the output id "EStageOutputId_3". If the CSceneDepthStage does not exist, the gbuffer stage creates its own depth target.

Stage execution is the final phase of the render graph. In this phase the render graph traverses the pipeline stages in the order generated during the "Compile Resources" phase. If a stage's resource requires a render target, one is acquired from the render target pool and set to the proper output slot. All resource transitions and render target resolves are automatically handled prior to the stage running.
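The three phases can be sketched in a very reduced form. The `RenderGraph` and `PipelineStage` types below are simplified, hypothetical stand-ins for CRenderGraph and CPipelineStage: resources are just names, ordering is derived only from "a writer runs before its readers", and render target pooling, resource transitions, and resolves are left out entirely.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical pass description: resources read and written, plus the work to run.
struct PipelineStage {
    std::string name;
    std::vector<std::string> reads;
    std::vector<std::string> writes;
    std::function<void()> execute;
};

class RenderGraph {
public:
    // Phase 1: stage generation.
    void AddStage(PipelineStage stage) { stages_.push_back(std::move(stage)); }

    // Phase 2: resource compilation. Orders stages so that every writer of a
    // resource runs before its readers. Returns false if no valid order exists.
    bool Compile() {
        order_.clear();
        std::vector<bool> done(stages_.size(), false);
        while (order_.size() < stages_.size()) {
            bool progressed = false;
            for (std::size_t i = 0; i < stages_.size(); ++i) {
                if (done[i] || !InputsReady(i, done)) continue;
                done[i] = true;
                order_.push_back(i);
                progressed = true;
            }
            if (!progressed) return false;  // cyclic dependency between stages
        }
        return true;
    }

    // Phase 3: stage execution in the compiled order.
    void Execute() const {
        for (std::size_t i : order_) stages_[i].execute();
    }

private:
    // A stage is ready when no other unfinished stage writes something it reads.
    bool InputsReady(std::size_t stage, const std::vector<bool>& done) const {
        for (const auto& input : stages_[stage].reads) {
            for (std::size_t j = 0; j < stages_.size(); ++j) {
                if (j == stage || done[j]) continue;
                for (const auto& output : stages_[j].writes) {
                    if (output == input) return false;
                }
            }
        }
        return true;
    }

    std::vector<PipelineStage> stages_;
    std::vector<std::size_t> order_;
};
```

Registering a tiled lighting stage that reads "gbuffer" and "depth", a gbuffer stage that reads and writes "depth", and a depth prepass that writes "depth" produces the order depth prepass, gbuffer, tiled lighting, regardless of the order in which the stages were added.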

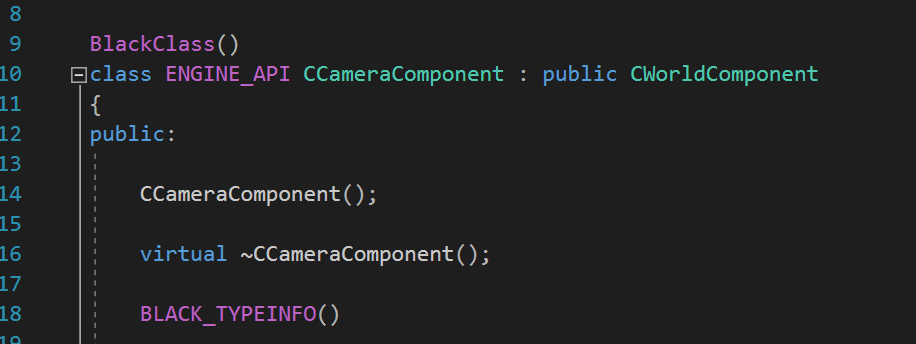

Currently I've been working on expanding my reflection system to also include code generation. Below are some examples of the source C++ code and the generated C# code.

Declaration of the camera component class.

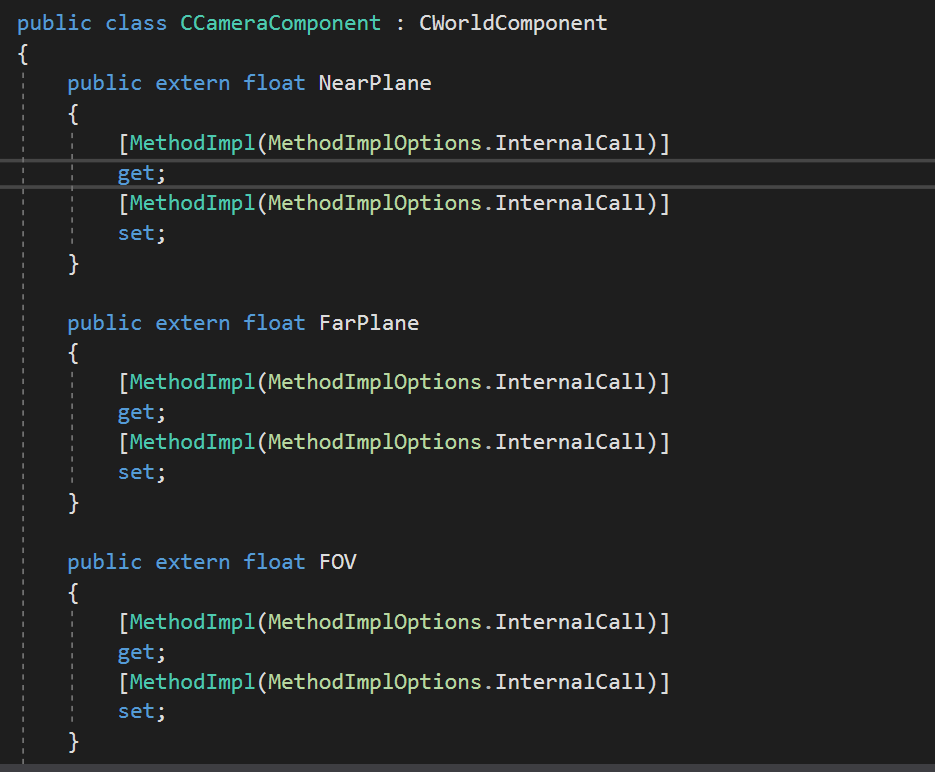

A few of the camera component's member variables.

The generated C# code used to access the member variables from script code.

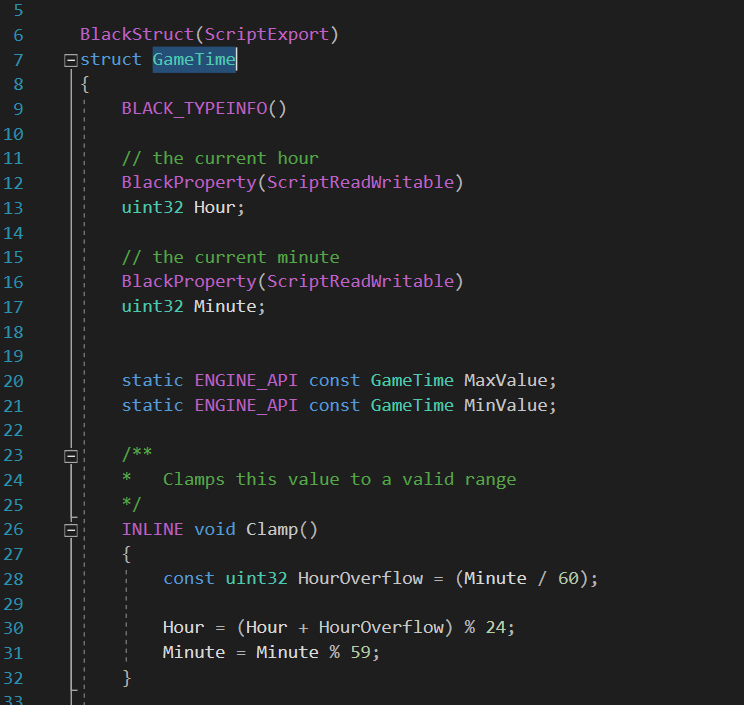

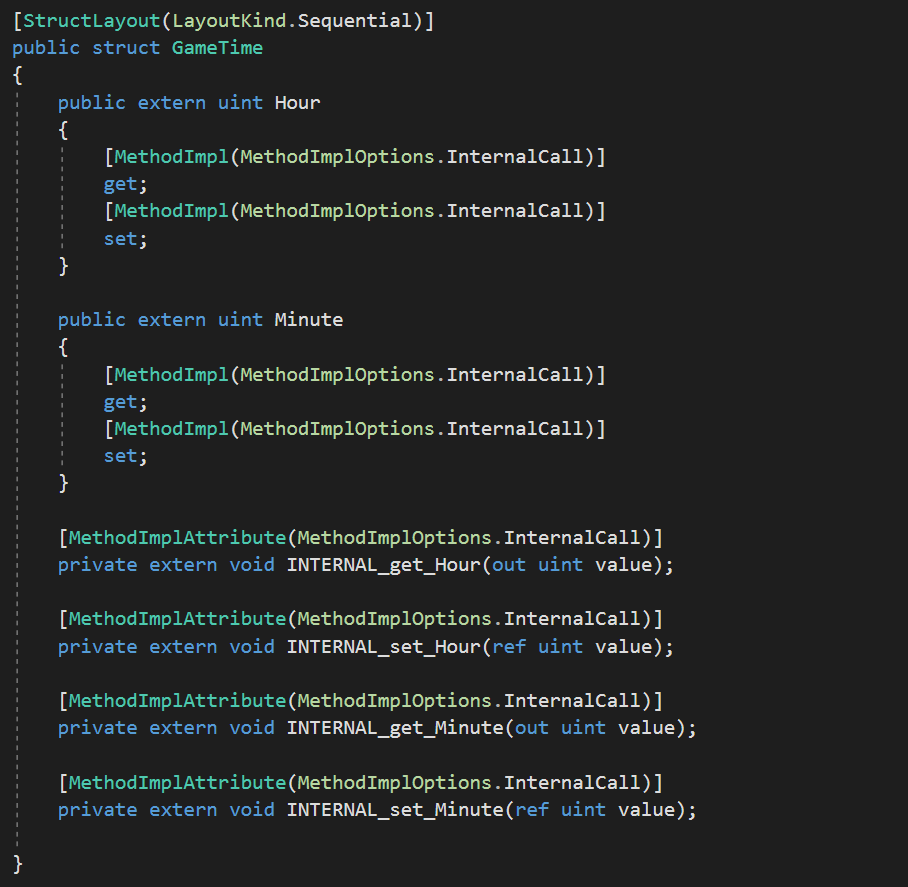

A struct declared in C++ that will be exported to C#.

The generated C# struct for "GameTime".

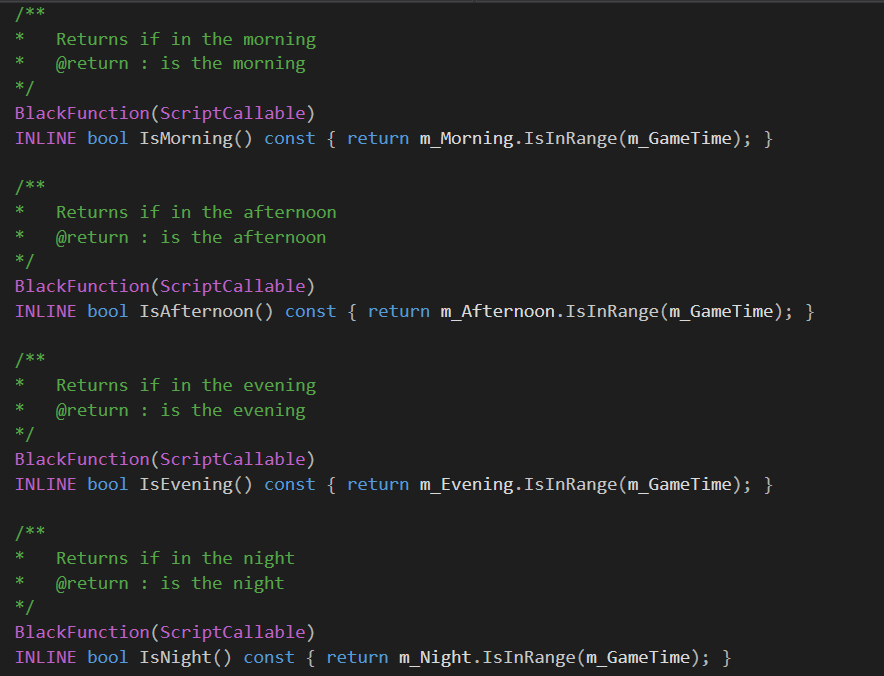

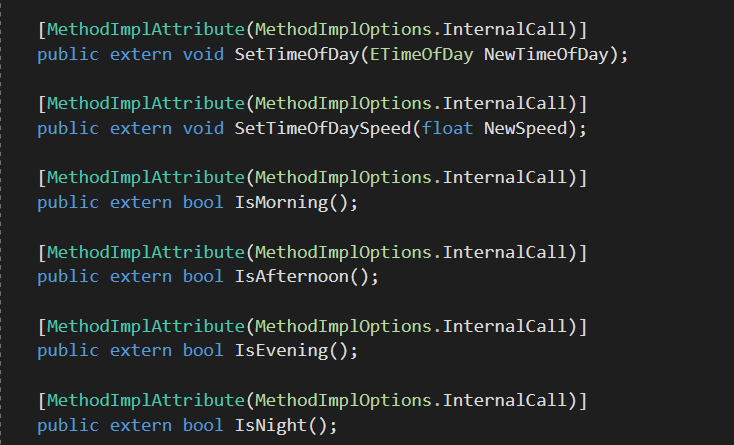

Functions declared in C++ that will be exported and callable from C#.

The generated C# code for "CTimeOfDayComponent".

Currently the code generation and reflection system supports four types: classes, structs, functions, and enums. Classes that require reflection are prefixed with the "BlackClass" macro, while structs use "BlackStruct". Both of these types must contain a "BLACK_TYPEINFO" macro for the generated type info. Enums are prefixed with "BlackEnum", functions with "BlackFunction", and properties with "BlackProperty". Any class, struct, or enum that must be exported to script code must contain the "ScriptExport" metadata. Properties and functions have more options. Properties support three kinds of metadata for script export: readable ("ScriptReadOnly"), writable ("ScriptWriteOnly"), or both ("ScriptReadWritable"). Functions may be callable from script code, using the "ScriptCallable" metadata, or implementable in script, using "ScriptImplementable".

After reading through the implementation of the shader system used in Destiny, in which they use bytecode for shader parameter setting, I thought to myself: why don't I expand upon this idea to handle descriptor sets? In this blog I will go through a few of the steps I took to make this a reality.

Resource Sets

The Black Osiris Engine has a concept of "resource sets". A resource set is a combination of shader parameters, textures, and buffer resources. An example of a resource set is a grouping of albedo and normal textures for a mesh, or a lighting buffer for a tiled shading pass. For efficiency, resource sets are split based on update frequency. Currently there are four resource slots: EResourceLayoutSlot_PerInstance, EResourceLayoutSlot_PerInstanceCustomData, EResourceLayoutSlot_PerMaterial, and EResourceLayoutSlot_PerPass.

To make resource set creation and management easier, each resource set is accompanied by a C++ class.

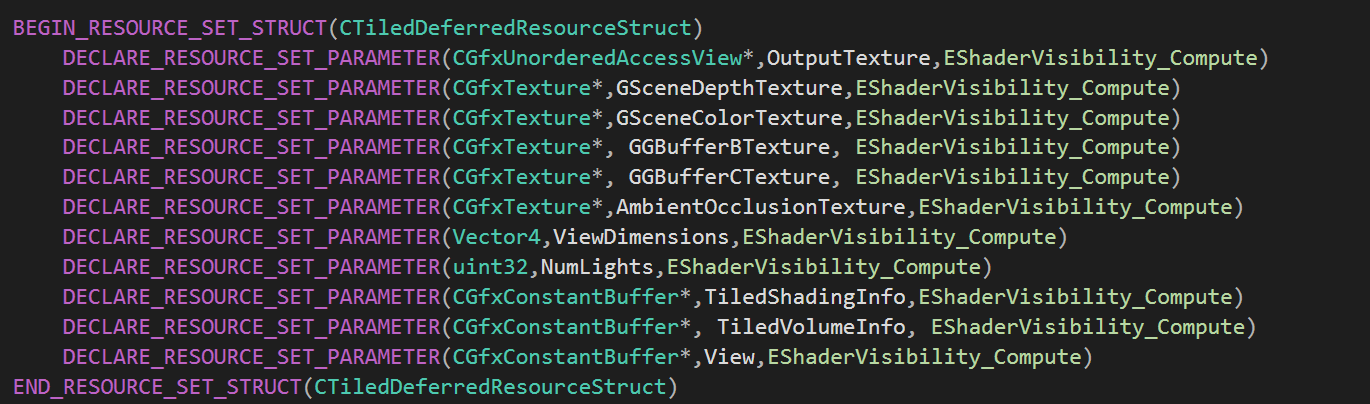

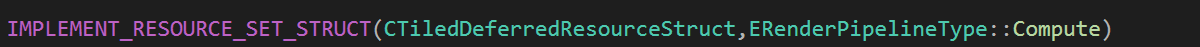

Example of a resource set used for the tiled deferred shading stage.

Function that registers the resource set struct with the global system, and states that it is used for compute shader stages.

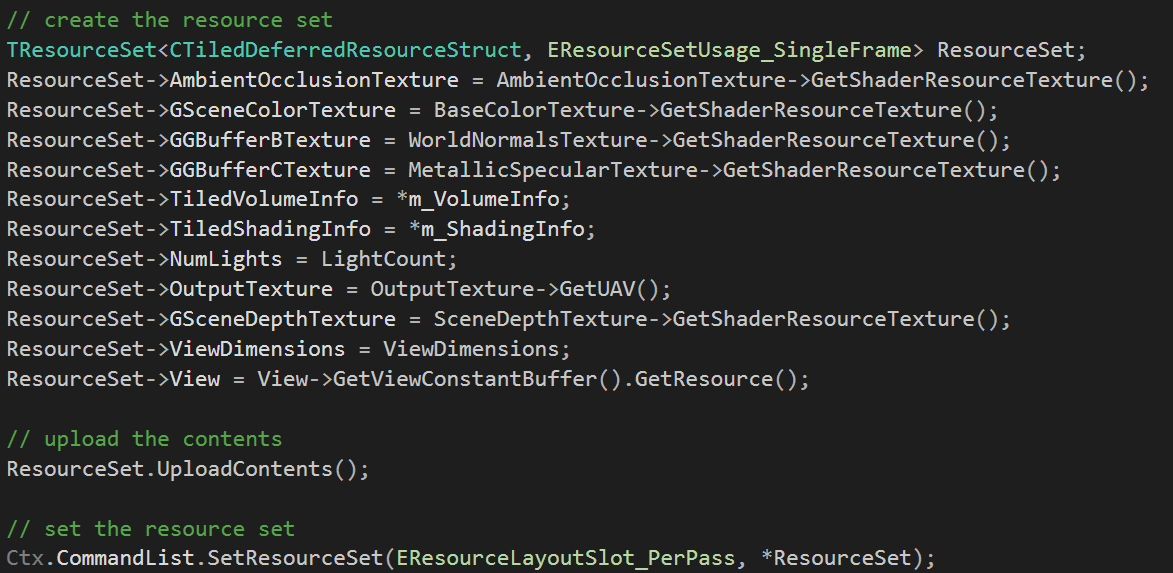

This macro definition generates meta information that is used to generate the bytecode explained later. The user provides the name of the resource set and the parameters (type name, name, shader visibility, array size). Below is an example of the creation and setting of this resource set.

Example of creation and setting of a resource set

In this example the user creates a resource set with the usage "SingleFrame". In the DirectX 12 implementation, resource sets with the "SingleFrame" usage are allocated from the per-frame descriptor heaps, while DirectX 11 resource sets allocate data from a per-frame stack allocator. After creating the resource set, the user sets the shader parameters and then "compiles" the resource set through the function "UploadContents". The user then sets this resource set on the command list at the "EResourceLayoutSlot_PerPass" binding location.
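Since the original screenshot is not reproduced here, the flow just described (set parameters, upload, bind to a slot) can be approximated with the sketch below. Only the slot names and "UploadContents" come from this post; `ResourceSet`, `CommandList`, `TiledLightingParams`, `SetParams`, `SetResourceSet`, and the `EResourceLayoutSlot_Count` value are hypothetical, and no real graphics API is involved.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Slots ordered by update frequency, as listed in the post.
enum EResourceLayoutSlot {
    EResourceLayoutSlot_PerInstance,
    EResourceLayoutSlot_PerInstanceCustomData,
    EResourceLayoutSlot_PerMaterial,
    EResourceLayoutSlot_PerPass,
    EResourceLayoutSlot_Count  // hypothetical helper value, not from the post
};

// Hypothetical parameter block for a tiled lighting pass.
struct TiledLightingParams {
    std::uint32_t lightCount = 0;
    std::uint32_t tileSize = 16;
};

// Hypothetical resource set: parameters are staged on the CPU, then "compiled"
// into an opaque byte blob by UploadContents.
class ResourceSet {
public:
    void SetParams(const TiledLightingParams& params) {
        params_ = params;
        uploaded_ = false;  // contents changed since the last upload
    }

    void UploadContents() {
        contents_.resize(sizeof(TiledLightingParams));
        std::memcpy(contents_.data(), &params_, sizeof(TiledLightingParams));
        uploaded_ = true;
    }

    bool IsUploaded() const { return uploaded_; }
    const std::vector<std::uint8_t>& Contents() const { return contents_; }

private:
    TiledLightingParams params_;
    std::vector<std::uint8_t> contents_;
    bool uploaded_ = false;
};

// Hypothetical command list that tracks one bound resource set per slot.
class CommandList {
public:
    void SetResourceSet(EResourceLayoutSlot slot, const ResourceSet* set) {
        assert(set == nullptr || set->IsUploaded());  // bind only compiled sets
        bound_[slot] = set;
    }

    const ResourceSet* Bound(EResourceLayoutSlot slot) const { return bound_[slot]; }

private:
    std::array<const ResourceSet*, EResourceLayoutSlot_Count> bound_{};
};
```

Keeping one binding per slot means a per-pass set can stay bound while per-material and per-instance sets change underneath it, which is the point of splitting by update frequency.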

API Abstraction: D3D11

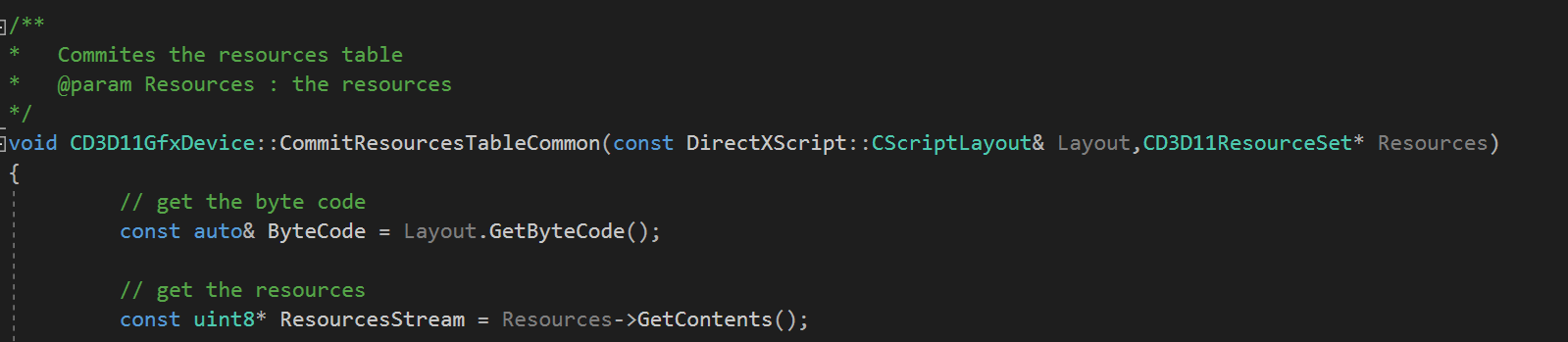

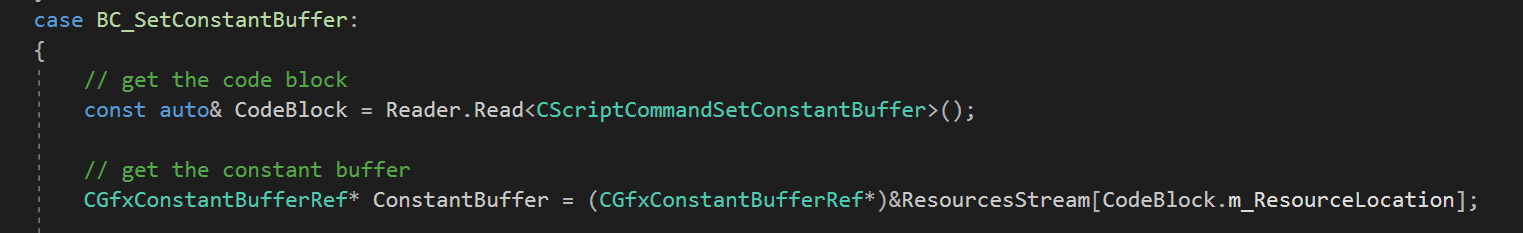

As said previously, I've chosen to use a bytecode implementation for resource sets in the API backends. The DirectX 11 implementation has a concept known as a "CScriptLayout", which has a 1:1 relation to resource sets and a 1:1 relation to pipeline state objects. The CScriptLayout contains a packed buffer of bytecode that dictates where each shader parameter should be bound. Prior to issuing a draw call, the DirectX backend checks whether any of the currently bound resource sets are dirty, i.e. have been updated since the last draw call, and if so calls the function "CommitResourceTablesCommon".

This function traverses the compiled bytecode for each resource set and gets the proper resources from the "ResourceStream", the opaque byte data that holds all of the resources set prior to calling "UploadContents".
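The traversal can be pictured as a tiny interpreter. The sketch below is an assumption-heavy illustration: the opcodes, the `BindInstruction` layout, `FakeContext`, `ReadHandle`, and `CommitResourceTable` are all hypothetical, resources are reduced to 32-bit handles, and nothing here reflects the real instruction encoding or the actual "CommitResourceTablesCommon" code.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical opcodes; the post does not list the real instruction set.
enum class BindOp : std::uint8_t { BindTexture = 0, BindBuffer = 1, End = 2 };

// Assumed packing: [opcode][shader slot][byte offset into the resource stream].
struct BindInstruction {
    BindOp op;
    std::uint8_t slot;
    std::uint16_t streamOffset;
};

// Stand-in for the device context: records which handle ended up in each slot.
struct FakeContext {
    std::uint32_t textures[8] = {};
    std::uint32_t buffers[8] = {};
};

// Read a 32-bit resource handle from the opaque resource stream.
std::uint32_t ReadHandle(const std::vector<std::uint8_t>& stream, std::size_t offset) {
    assert(offset + sizeof(std::uint32_t) <= stream.size());
    std::uint32_t handle = 0;
    std::memcpy(&handle, stream.data() + offset, sizeof(handle));
    return handle;
}

// Walk the bytecode and bind every resource it names. Returns the number of binds.
int CommitResourceTable(const std::vector<BindInstruction>& bytecode,
                        const std::vector<std::uint8_t>& stream, FakeContext& ctx) {
    int bound = 0;
    for (const BindInstruction& inst : bytecode) {
        if (inst.op == BindOp::End) break;
        assert(inst.slot < 8);
        const std::uint32_t handle = ReadHandle(stream, inst.streamOffset);
        if (inst.op == BindOp::BindTexture) {
            ctx.textures[inst.slot] = handle;
        } else {
            ctx.buffers[inst.slot] = handle;
        }
        ++bound;
    }
    return bound;
}
```

The useful property is that the bytecode is built once per layout, so the per-draw work is a linear walk over a small packed buffer with no name lookups or reflection queries.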
